Organizations struggle to ensure software quality when they rely on manual testing alone. AI has changed this landscape: it automates test case creation and test data generation, tasks that once took a lot of time and resources. By harnessing AI in QA, firms can now focus on efficiency and innovation. Intelligent systems streamline testing workflows, improve accuracy, and limit manual mistakes.

Businesses widely adopt AI tools across their testing practices to detect anomalies and patterns. These tools can simulate a vast range of user behavior and offer comprehensive insights into software performance. Integrating AI testing services into QA practices improves software reliability and accuracy, and helps deliver quality products that match user expectations.

Traditional QA processes bring complications. They are time-consuming, error-prone, and tough to scale in an agile environment. As development cycles shorten and software complexity grows, these limitations become evident. In response, businesses are turning to AI testing services, which enable smart automation, enhance test coverage, and speed up QA workflows.

AI testing is emerging as a highly effective approach to quality assurance that elevates performance and leads to successful outcomes. The adoption of AI-powered test automation is increasing: 16% of firms now leverage AI in their testing process for advanced test execution, and 73% of QA testers rely on automation for functional and regression testing. Reports project that the AI market will reach $1,339.1 billion by 2030.

AI assurance is emerging as a crucial safeguard in an AI-first world. The shift redefines QA from manual execution to strategic, proactive decision-making. Integrating AI maintains quality at scale, shortens test cycles, and allows multiple deployments on the same day. It helps ensure that apps are error-free, reliable, and functional. AI-driven solutions are quickly entering the QA landscape and taking automation to another level. In this blog, we’ll discuss how AI testing services are redefining QA and why organizations must embrace them to stay current.

What is AI Assurance?

AI assurance is the process of validating, monitoring, and auditing AI systems to ensure they are safe, reliable, and compliant with regulations. It includes testing data, models, and operations to build trust, minimize risks, and demonstrate accountability throughout the AI system’s lifecycle. In practice, it also uses smart algorithms to generate, automate, and manage tests, offering greater adaptability than traditional QA. AI testing outsourcing can cut test maintenance by 85% through self-healing scripts.

AI is an innovation that is changing industries, with immense benefits and potential. It influences the QA process by generating test data sets and inspecting software quality through automation. Manual testing raises the risk of errors and increases cost and time. This challenge becomes more prominent when apps are developed and released across various platforms.

An AI testing service provider helps overcome these challenges and runs much of the testing process with minimal human involvement. It helps predict client behavior and flag fraudulent activity that traditional testing practices fail to detect. With the right strategies, businesses can eliminate test coverage gaps, optimize test automation, and enhance agility. To obtain these benefits, the QA team can leverage AI testing tools for better accuracy.

❋ Key Objectives of AI Assurance

■ Model reliability

Verifying model reliability is the prime goal of AI testing solutions: the model should perform consistently and accurately across various environments and data conditions. A reliable AI system must handle edge cases, growing datasets, and noisy inputs without degrading. This requires thorough testing, validation, and frequent monitoring throughout the model lifecycle. Reliability builds trust among stakeholders and users by showing that AI systems deliver dependable outcomes in real-world applications.

■ Bias and fairness detection

Bias and fairness detection aims to identify and mitigate unintended discrimination within AI models. AI systems can learn biased decisions from their training data, causing unfair results for specific groups. AI assurance practices involve auditing datasets, comparing model outputs across demographic segments, and applying fairness metrics. Adjusting algorithms helps to limit bias, uphold ethical standards, drive inclusivity, and ensure legal compliance. The aim is to build user trust in AI decision-making systems.
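
Comparing model outputs across demographic segments can be sketched in a few lines. The example below is a minimal illustration, not a complete fairness audit: it computes the selection rate per group and the demographic parity gap, one of several possible fairness metrics. The function names and the toy data are assumptions made up for this sketch.

```python
from collections import defaultdict

def selection_rates(groups, predictions):
    """Return the share of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}

def demographic_parity_gap(groups, predictions):
    """Largest difference in selection rate between any two groups."""
    rates = selection_rates(groups, predictions)
    return max(rates.values()) - min(rates.values())

# Toy data (hypothetical): group label and binary model decision per record.
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
predictions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, predictions)      # A: 0.75, B: 0.25
gap = demographic_parity_gap(groups, predictions)  # 0.5
print(rates, gap)
```

A QA team would typically agree on an acceptable gap in advance and fail the check when the measured gap exceeds it. What counts as acceptable depends on the use case and applicable regulation.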

■ Transparency and explainability

Transparency and explainability focus on making AI systems understandable to users. Complex models, especially deep learning systems, make it hard to see how a decision was reached. AI assurance encourages the use of explainable AI (XAI) techniques. Clear documentation supports stakeholders in understanding how decisions are made. AI assurance fosters accountability, supports debugging, and verifies that AI systems can be trusted and validated in crucial business contexts.

■ Safety and risk mitigation

Safety and risk mitigation validates that the AI system operates without harming users or the business. It involves identifying potential errors before they cause damage. Frequent monitoring and recovery plans further limit potential damage. By addressing risks up front, businesses can release AI systems with confidence. The process safeguards users, maintains system integrity, and avoids reputational damage.

■ Regulatory compliance

Regulatory compliance verifies that AI systems adhere to ethical, legal, and industry-specific standards. As governments introduce AI regulations and data protection laws, firms must align their AI practices with these requirements. AI assurance involves maintaining documentation, protecting data privacy, and integrating governance frameworks. Compliance also includes frequent audits, reporting mechanisms, and adherence to regulations such as GDPR. Meeting regulatory standards avoids penalties and builds credibility and trust among stakeholders.

Why Traditional QA is Not Enough for AI Systems

❋ Probabilistic behavior instead of deterministic outcomes

Traditional QA is designed for systems where the same input always produces the same output. AI systems, however, produce outputs that can vary even with small changes in input. This makes it tough to define a fixed test case and expected result. AI assurance must instead focus on statistical validation, confidence levels, and distribution-based testing. Evaluating performance involves measuring accuracy, precision, and robustness across various scenarios. These challenges make traditional QA practices insufficient.
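
One way to picture statistical validation is to replace a single pass/fail assertion with a confidence interval over many trials. The sketch below assumes a hypothetical acceptance rule: a release passes only if the lower bound of a 95% Wilson score interval on accuracy stays above an agreed minimum. The function names and thresholds are illustrative, not a prescribed method.

```python
import math

def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a proportion (z=1.96 gives roughly 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return centre - half, centre + half

def passes_threshold(successes, trials, min_accuracy):
    """Pass only if the interval's lower bound clears the required accuracy."""
    lower, _ = wilson_interval(successes, trials)
    return lower >= min_accuracy

# Hypothetical run: the model answered 90 of 100 evaluation prompts correctly.
lower, upper = wilson_interval(90, 100)
print(round(lower, 3), round(upper, 3))
print(passes_threshold(90, 100, 0.80))  # lower bound is above 0.80
print(passes_threshold(90, 100, 0.85))  # lower bound is below 0.85
```

The design choice here is to test the lower bound rather than the observed accuracy, so that a lucky run on a small sample does not pass by chance.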

❋ Heavy dependency on training data quality and coverage

AI systems rely heavily on the diversity, quality, and transparency of their training data, which is not the case for traditional software. Poor datasets can cause inaccurate results, regardless of how perfectly the code is written. Traditional QA doesn’t measure data quality. AI assurance must involve data validation and dataset audits to verify coverage of real-world scenarios. Frequent updates to training data demand ongoing validation, making data-centric testing a crucial element.
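
A dataset audit can start with very simple checks. The following sketch assumes records are plain dictionaries with hashable values; the field names (`age`, `income`, `label`) and the `audit_dataset` helper are hypothetical and exist only for illustration. It counts rows with missing required values, exact duplicates, and the share of each label.

```python
from collections import Counter

def audit_dataset(rows, label_key, required_keys):
    """Report missing values, exact duplicate rows, and label balance."""
    missing = sum(
        1 for row in rows if any(row.get(key) is None for key in required_keys)
    )

    seen = set()
    duplicates = 0
    for row in rows:
        fingerprint = tuple(sorted(row.items()))  # order-independent row identity
        if fingerprint in seen:
            duplicates += 1
        else:
            seen.add(fingerprint)

    labels = Counter(row.get(label_key) for row in rows)
    total = len(rows)
    label_share = {label: count / total for label, count in labels.items()}

    return {
        "rows": total,
        "rows_with_missing": missing,
        "duplicate_rows": duplicates,
        "label_share": label_share,
    }

# Hypothetical loan-decision records.
rows = [
    {"age": 34, "income": 52000, "label": "approve"},
    {"age": 34, "income": 52000, "label": "approve"},  # exact duplicate
    {"age": None, "income": 41000, "label": "reject"},  # missing age
    {"age": 51, "income": 78000, "label": "approve"},
]
report = audit_dataset(rows, label_key="label", required_keys=["age", "income"])
print(report)
```

Running a report like this on every training data update is one way to make the ongoing validation described above routine.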

❋ Model drift and performance changes over time

AI models aren’t static: their performance can degrade over time as real-world data changes, a problem known as model drift. Traditional QA assumes stable system behavior after release. Frequent monitoring and performance evaluation are necessary to maintain accuracy. Without these practices, models can become outdated. This is why traditional QA practices aren’t enough to ensure long-term system effectiveness.
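
Drift in an input feature can be quantified by comparing its distribution at training time with its distribution in production. The sketch below uses the Population Stability Index (PSI), one common choice among several; the equal-width binning, the small floor used to avoid dividing by zero, and the example data are all assumptions of this illustration.

```python
import math

def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a newer sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # Floor each share so empty bins do not cause log(0) or division by zero.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical feature values: training baseline vs. shifted production data.
baseline = list(range(100))
shifted = [v + 30 for v in baseline]

print(psi(baseline, baseline))  # identical distributions give 0.0
print(psi(baseline, shifted))   # a large shift gives a large PSI
```

A frequently quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions and should be tuned per feature.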

❋ Bias and fairness risks in AI decision-making

AI solutions can inherit biases present in their training data, which can lead to unfair decisions. Traditional QA prioritizes functional accuracy rather than ethical implications, making it inadequate for assessing these risks. AI assurance demands fairness testing across various demographic groups, bias detection techniques, and mitigation practices. It includes examining model outputs to confirm that groups are treated consistently. Addressing bias is necessary for maintaining regulatory compliance and sustaining trust in AI-driven decisions.

❋ Limited explainability and transparency in AI models

Many AI models rely on deep learning and lack transparency in how they generate outputs. Traditional QA doesn’t address the requirement for explainability. AI assurance should incorporate explainable AI techniques and clear documentation of decision-making. Both are necessary for debugging, auditing, and regulatory compliance. Without transparency, it is tough to validate AI systems. Opaque models therefore expose another limitation of traditional QA approaches.

Core Components That Define AI Assurance Foundations

❋ Data Assurance

Data assurance prioritizes verifying the integrity, quality, and reliability of the data behind AI systems. Before model training and release, the data should be checked for consistency and accuracy. Detecting and minimizing data bias is crucial to avoiding biased results. Coverage analysis confirms that different situations and edge cases are represented.

Data lineage tracking makes it possible to check the source of data. Through this, businesses can trace how data changed and how it was used across their pipeline. Strong data assurance policies encourage the development of trustworthy AI systems and minimize mistakes caused by incorrect datasets.

❋ Model Assurance

Model assurance verifies that AI models operate accurately and consistently under a variety of scenarios. It involves assessing accuracy using relevant measures and validating outcomes across several datasets. Robustness testing examines how models handle anomalies and unexpected inputs. Adversarial testing uncovers flaws by subjecting models to deliberately altered data.
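
A basic robustness check perturbs each input slightly and measures how often the prediction stays the same. The sketch below uses a toy threshold classifier as a stand-in for a real model; `toy_model`, `prediction_stability`, and the noise level are hypothetical names and values chosen for illustration. Random noise is a simple form of robustness testing, not a true adversarial attack.

```python
import random

def toy_model(features):
    """Hypothetical stand-in for a real model: predict 1 when the mean is >= 0.5."""
    return 1 if sum(features) / len(features) >= 0.5 else 0

def prediction_stability(model, inputs, noise=0.01, trials=20, seed=0):
    """Share of perturbed inputs whose prediction matches the unperturbed one."""
    rng = random.Random(seed)  # seeded so the check is repeatable
    stable = 0
    total = 0
    for features in inputs:
        baseline = model(features)
        for _ in range(trials):
            perturbed = [x + rng.uniform(-noise, noise) for x in features]
            stable += model(perturbed) == baseline
            total += 1
    return stable / total

# Inputs far from the decision boundary should be fully stable under small noise.
clear_cases = [[0.9, 0.8], [0.1, 0.2]]
print(prediction_stability(toy_model, clear_cases))

# An input on the boundary is expected to flip for some perturbations.
print(prediction_stability(toy_model, [[0.5, 0.5]]))
```

A low stability score points to inputs near a decision boundary, which are good candidates for closer review or additional training data.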

Drift monitoring tracks variations in model performance over time owing to changing data patterns. Together, these techniques help models stay stable, secure, and effective across their lifecycle. They lower the risk of performance degradation and support reliable AI-driven decision-making in real-world scenarios.

❋ Ethical AI Testing

Ethical AI testing solutions help ensure that AI systems perform fairly. They use bias testing and fairness evaluation to detect and reduce discrimination in model outputs. Organizations can use advanced AI testing tools to systematically examine ethical gaps and ensure regulatory compliance. Responsible AI validation aims to align models with ethical principles. Human involvement is critical for reviewing automated judgments and addressing edge cases. These standards contribute to the development of trustworthy AI systems.

❋ Operational Assurance

Operational assurance aims to verify that AI systems are reliable in the real world. It covers frequent monitoring of AI models to detect gaps. Performance tracking verifies that models continue to meet requirements, including regulatory ones. Hiring experts to implement advanced techniques enables the rapid detection and resolution of problems that impact user experience. Continuous validation checks that models remain accurate as data changes. Together, these techniques help AI systems run smoothly and reduce downtime. They allow AI systems to deliver consistent value while adjusting to changing business conditions.

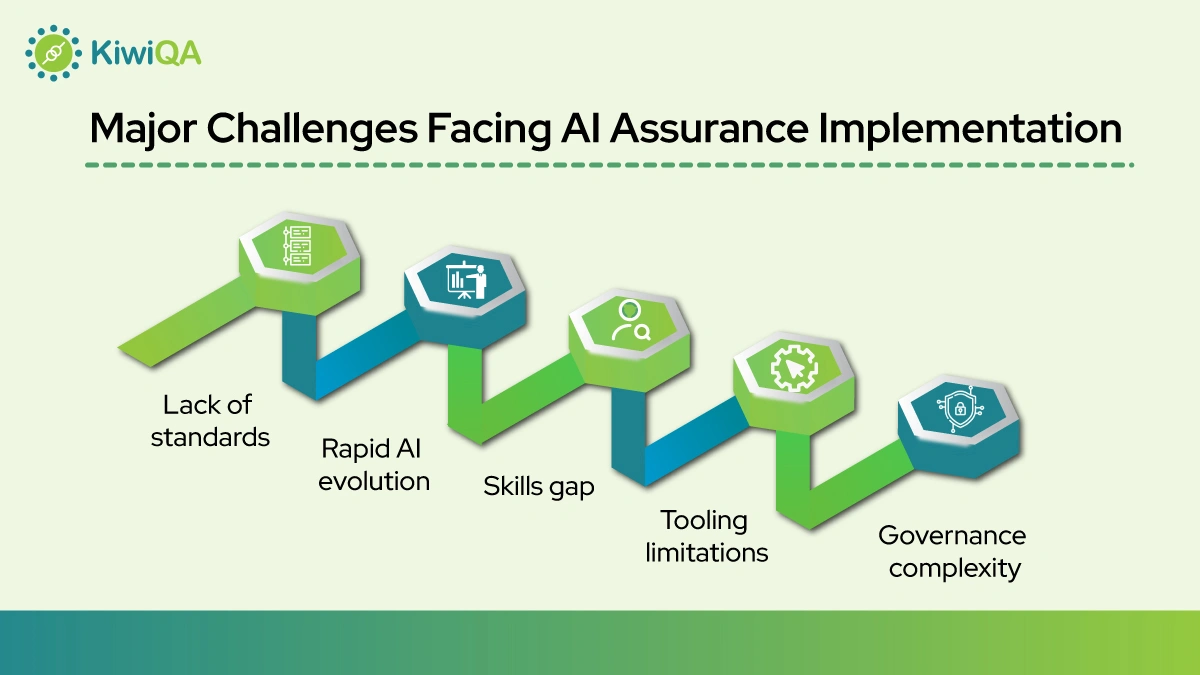

Major Challenges Facing AI Assurance Implementation

❋ Lack of standards

The prime challenge in AI assurance is the absence of standards. Unlike traditional software testing, AI lacks consistent guidelines for risk evaluation and validation. Firms often depend on evolving best practices, which leads to inconsistent implementation. This makes benchmarking performance and ensuring compliance tough across industries. Without shared standards, it becomes tough to compare systems and build trust in AI solutions, which slows down the adoption of reliable and robust AI assurance practices.

❋ Rapid AI evolution

The rapid evolution of AI technology poses a constant challenge for assurance practice. Advanced models, techniques, and tools emerge frequently, making it tough for businesses to keep testing frameworks and validation methods up to date. This pace demands continuous learning, investment, and the willingness to adopt advanced capabilities. The AI assurance team must keep up with innovations while maintaining system reliability. This adds complexity, especially for businesses trying to scale AI.

❋ Skills gap

A major barrier to effective AI assurance is the lack of skilled staff with expertise in both AI and QA. Integrating robust AI testing solutions demands knowledge of ML and data science. Most firms struggle to find talent capable of managing these multidisciplinary demands. This skills gap leads to inadequate testing and overlooked risks, and slows down the adoption of assurance practices. Upskilling the existing team and investing in specialized training programs are necessary to bridge the gap and ensure effective AI system validation.

❋ Tooling limitations

Current testing tools are not equipped to manage the complexity of AI systems. Traditional QA tools are designed for deterministic software and might not support bias detection. Advanced AI-specific tools are emerging, but they are still evolving and may lack scalability or integration capabilities. This creates challenges in implementing end-to-end AI assurance. Businesses often depend on custom solutions, which increases the complexity and effort required to achieve comprehensive testing and validation.

❋ Governance complexity

AI governance includes managing policies, ethical considerations, and operational controls, which makes it complex. Firms must comply with emerging regulatory frameworks while maintaining transparency in AI systems. Coordinating across various stakeholders, including legal and technical teams, adds challenges. Establishing clear ownership, documentation, and an audit process is crucial but tough to implement. Without strong governance structures, firms risk non-compliance, ethical lapses, and diminished trust, making governance a key challenge in AI assurance implementations.

How QA Engineers Are Adapting to the Rise of AI Technology

❋ How QA Roles Are Evolving

■ From testers to AI assurance engineers

QA roles are evolving rapidly, and QA testers are expanding their skill sets to include AI assurance. Their new role includes evaluating models, data quality, and ethical gaps. It relies increasingly on frequent validation and monitoring, and on aligning AI outputs with business and regulatory objectives.

■ Collaboration with data scientists

QA engineers collaborate with data scientists to validate models and datasets across the AI lifecycle. This collaboration improves the alignment between model development and testing methodologies. QA engineers contribute by developing test scenarios and detecting errors early. This raises the quality of outcomes and helps the team confirm that AI systems fulfill technical and organizational needs.

■ Understanding ML pipelines

Modern QA engineers are gaining a thorough understanding of ML pipelines. This understanding enables them to identify likely points of failure and develop appropriate validation procedures. Understanding the ML workflow allows QA experts to verify consistency and performance.

❋ New Skills for AI Assurance

■ Machine learning basics

QA engineers are learning the fundamentals of ML to create better test cases and interpret model outputs effectively. They want to know how models learn and make predictions. This knowledge allows QA experts to discover flaws earlier, validate results properly, and release an accurate AI system.

■ Data analysis

Data analysis skills are becoming important for QA engineers working with AI systems. Prior to testing models, they must assess datasets for quality and consistency. By analyzing trends and distributions, the QA team can detect potential errors.

■ Statistical thinking

Statistical thinking enables QA engineers to analyze AI systems using quantitative methods. They use statistical measures to evaluate model performance. This approach helps in characterizing errors, confirming results across datasets, and making sound judgments.
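
As one illustration of statistical thinking, a QA engineer comparing two model versions on the same test set can ask whether the observed accuracy difference is larger than resampling noise. The sketch below uses a paired bootstrap; the function name, the number of resamples, and the per-example correctness flags are assumptions for this example rather than a standard procedure from any particular tool.

```python
import random

def bootstrap_diff_ci(flags_a, flags_b, resamples=1000, alpha=0.05, seed=0):
    """Confidence interval for accuracy(B) - accuracy(A) on a shared test set.

    flags_a and flags_b hold 1 (correct) or 0 (wrong) for the same examples.
    """
    if len(flags_a) != len(flags_b):
        raise ValueError("both models must be scored on the same examples")
    rng = random.Random(seed)
    n = len(flags_a)
    diffs = []
    for _ in range(resamples):
        # Resample example indices with replacement, keeping pairs together.
        idx = [rng.randrange(n) for _ in range(n)]
        diffs.append(sum(flags_b[i] - flags_a[i] for i in idx) / n)
    diffs.sort()
    lower = diffs[int(alpha / 2 * resamples)]
    upper = diffs[min(resamples - 1, int((1 - alpha / 2) * resamples))]
    return lower, upper

# Hypothetical results: model B fixes some of model A's mistakes.
model_a = [1] * 70 + [0] * 30
model_b = [1] * 85 + [0] * 15
print(bootstrap_diff_ci(model_a, model_b))
```

If the interval excludes zero, the improvement is unlikely to be an artifact of which examples happened to be in the test set.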

■ Ethics and governance

QA engineers are increasingly responsible for verifying that AI systems adhere to ethical and regulatory guidelines. They help to build governance frameworks by documenting processes, verifying compliance, and providing support for audits.

■ Monitoring and observability

This skill allows QA engineers to track AI system performance in real time. They use tools and metrics to identify anomalies and performance gaps. Continuous monitoring ensures that problems are detected and addressed promptly.
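
A minimal form of such monitoring is a rolling accuracy check over the most recent predictions. The class below is a sketch under stated assumptions: ground-truth labels arrive shortly after each prediction, and the window size and minimum accuracy are hypothetical values a team would choose for itself.

```python
from collections import deque

class RollingAccuracyMonitor:
    """Track accuracy over the last `window` predictions and flag drops."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # old outcomes fall off automatically
        self.min_accuracy = min_accuracy

    def record(self, prediction, actual):
        """Store one outcome and return whether the model still looks healthy."""
        self.outcomes.append(prediction == actual)
        return self.is_healthy()

    def accuracy(self):
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def is_healthy(self):
        # Judge only once the window is full, to avoid noisy early alerts.
        if len(self.outcomes) < self.outcomes.maxlen:
            return True
        return self.accuracy() >= self.min_accuracy

# Small window so the behavior is easy to follow.
monitor = RollingAccuracyMonitor(window=4, min_accuracy=0.75)
for prediction, actual in [(1, 1), (1, 1), (1, 1), (1, 0), (1, 0)]:
    healthy = monitor.record(prediction, actual)
    print(monitor.accuracy(), healthy)
```

In a real deployment the unhealthy signal would feed an alerting system or trigger retraining; here it is simply returned to the caller.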

Ready to Embrace AI Assurance? Shape the Future of QA

The rise of AI makes it possible to test software products in various environments and scenarios. It allows the QA team to identify and resolve issues efficiently. The future of QA engineering is bright as AI plays a strong role in transforming the testing process. Since the future of QA is AI-powered, businesses must adopt these practices.

Organizations that embrace AI consulting services for QA will be better positioned in a tech-driven landscape. With growing demand for speed, scalability, and accuracy, traditional testing practices struggle to keep pace. By adopting AI-powered QA solutions, companies can streamline QA, shorten release cycles, and improve software reliability.

Implementing AI practices in QA enhances the ability to generate and execute test cases. Leveraging AI advisory services for QA testing enables the development of accurate testing protocols. As adoption of AI grows, it will redefine QA standards and help ensure seamless performance and user satisfaction.